Every week, another AI tool promises to transform commerce. Teams evaluate AI-powered search. They demo dynamic pricing engines. They pilot product recommendation systems. They invest in the applications they can see — the ones with dashboards, demos, and quick ROI stories.

And then they wonder why their AI investments underperform.

The problem is not the tools. The problem is that most organizations build AI from the top down, investing in applications before they have the infrastructure to support them. They optimize the visible layer while ignoring the foundational ones.

“Most organizations invest in the top. Winners design across the stack.”

That is the insight behind the AI Tech Stack for Commerce framework. It is not a vendor list or a feature comparison. It is a structural map of how AI actually works inside a commerce organization — and where the real leverage lives.

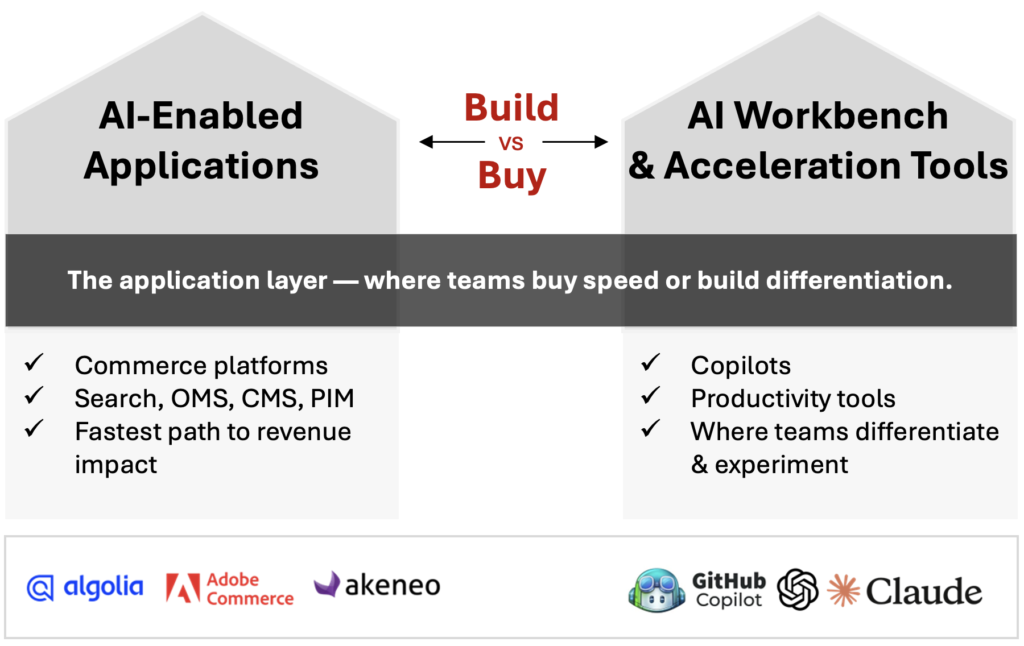

Layer 1: AI-Enabled Applications and Workbench Tools — The Build vs. Buy Decision

At the top of the stack sits the layer most organizations start with — and where the most important early strategic decision lives: build vs. buy.

On the buy side are AI-enabled applications: commerce platforms and SaaS tools that embed AI directly into workflows. AI-powered search and merchandising (Algolia, Adobe), personalization engines, pricing optimization tools, and order management systems with predictive capabilities. These are fast to deploy, easy to evaluate, and designed to show immediate value. For most capabilities at this layer, buy is the right default answer.

On the build side are AI workbench and acceleration tools: copilots for developers, merchandisers, and product managers — tools like Claude and GitHub Copilot that accelerate how teams work rather than replacing workflows wholesale. Experimentation frameworks. Prototype environments. The infrastructure for teams to develop proprietary capabilities that vendor roadmaps will never deliver.

The distinction matters because the two sides of this layer serve different purposes. Bought applications are the fastest path to revenue impact. Built workbench capabilities are where differentiation compounds over time — because the advantage is not the tool, it is what your team learns to do with it.

The risk most organizations take is defaulting entirely to buy. That works until a competitor builds something you cannot purchase.

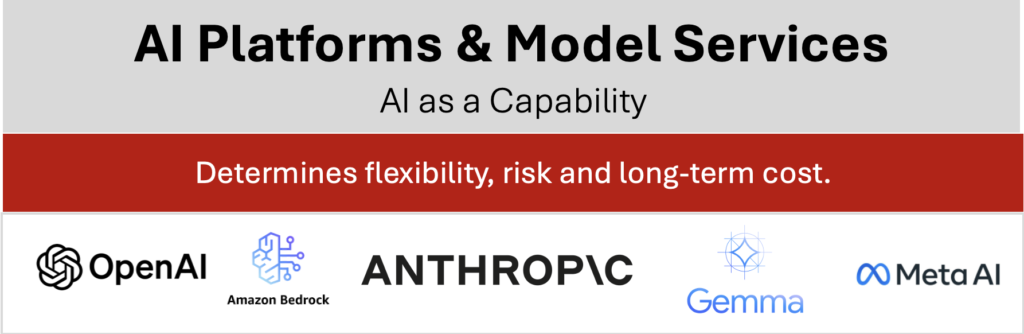

Layer 2: AI Platforms and Model Services — The Capability Determinant

Below the applications and the workbench sits the platform layer — the foundation models, fine-tuning infrastructure, and agent frameworks that determine what is actually possible at every layer above.

OpenAI, Anthropic, Google, Azure, Cohere. The choice of platform is not just a technical decision — it determines flexibility, risk, and long-term cost structure. Organizations that treat this layer as a commodity procurement decision consistently underestimate its strategic importance.

The right platform choice depends on three things: where differentiation matters (commodity capabilities can use shared APIs; proprietary advantages may justify fine-tuning), what governance requirements apply (data residency, compliance, auditability), and how much the cost structure scales as usage grows.

Most organizations in 2026 are using multiple providers across different use cases. The risk is fragmentation — building on platforms that do not interoperate well creates integration debt that slows every future AI initiative.
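One common way to contain that fragmentation is to route every model call through a single internal interface, so that adding or swapping a provider touches one adapter rather than every application. The sketch below is illustrative only: `ModelProvider`, `EchoProvider`, `ModelRouter`, and the use-case names are hypothetical, and a real adapter would wrap each vendor's own SDK instead of echoing the prompt.

```python
from dataclasses import dataclass, field
from typing import Dict, Protocol


class ModelProvider(Protocol):
    """Hypothetical internal contract that every provider adapter satisfies."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoProvider:
    """Placeholder adapter; a real one would call a vendor SDK here."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class ModelRouter:
    """Maps each use case to one provider, so applications depend on this
    interface rather than importing vendor SDKs directly."""

    routes: Dict[str, ModelProvider] = field(default_factory=dict)

    def register(self, use_case: str, provider: ModelProvider) -> None:
        self.routes[use_case] = provider

    def complete(self, use_case: str, prompt: str) -> str:
        if use_case not in self.routes:
            raise KeyError(f"no provider registered for {use_case!r}")
        return self.routes[use_case].complete(prompt)


router = ModelRouter()
router.register("product_copy", EchoProvider("provider-a"))
router.register("support_triage", EchoProvider("provider-b"))
print(router.complete("product_copy", "Describe a waterproof jacket"))
# [provider-a] Describe a waterproof jacket
```

In this design, moving one use case to a different provider is a single `register` call. The trade-off is that a shared interface can drift toward the lowest common denominator, so provider-specific features may need a deliberate escape hatch.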

Layer 3: Cloud, Data, and Compute Infrastructure — The Layer That Determines Everything Else

At the foundation sits the layer that most commerce executives never see in demos but determines the outcome of every AI investment above it: cloud infrastructure, data platforms, security, and compute.

AWS, Microsoft Azure, Google Cloud, Snowflake, Databricks. These are not exciting tools to evaluate. But they are the reason Walmart can run AI across thousands of stores simultaneously while a competitor with the same application layer cannot.

The hard truth: for many organizations, 40 to 60 percent of total AI investment in the first two years goes here — establishing data pipelines, implementing governance frameworks, creating unified schemas that let models see across ERP, CRM, WMS, and marketing systems. This work does not show up in demos. It shows up in results twelve months later.
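To make "unified schema" concrete: each source system describes the same customer in its own shape, and a mapping layer normalizes those records into one shared view that models can read. A minimal sketch, assuming hypothetical field names for the ERP, CRM, and WMS records and assuming all three already share a customer identifier (in practice, identity resolution is often a project of its own, and real pipelines add validation and lineage tracking):

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass
class UnifiedCustomer:
    """Hypothetical shared schema that downstream models read."""

    customer_id: str
    email: Optional[str] = None
    lifetime_revenue: float = 0.0
    open_shipments: int = 0


def unify(erp_orders: Iterable[dict], crm_contacts: Iterable[dict],
          wms_shipments: Iterable[dict]) -> Dict[str, UnifiedCustomer]:
    """Merge per-system records into one view keyed by a shared customer ID."""
    customers: Dict[str, UnifiedCustomer] = {}

    def get(cid: object) -> UnifiedCustomer:
        key = str(cid)  # normalize IDs that arrive as ints in one system, strings in another
        if key not in customers:
            customers[key] = UnifiedCustomer(customer_id=key)
        return customers[key]

    # ERP: hypothetical fields "cust_no" and "order_total" (amount stored as text)
    for row in erp_orders:
        get(row["cust_no"]).lifetime_revenue += float(row["order_total"])
    # CRM: hypothetical fields "customerId" and "emailAddress"
    for row in crm_contacts:
        get(row["customerId"]).email = row["emailAddress"].strip().lower()
    # WMS: hypothetical fields "consignee_id" and "status"
    for row in wms_shipments:
        if row["status"] != "delivered":
            get(row["consignee_id"]).open_shipments += 1
    return customers


erp = [{"cust_no": 42, "order_total": "120.50"}, {"cust_no": 42, "order_total": "79.50"}]
crm = [{"customerId": "42", "emailAddress": " Ana@Example.com "}]
wms = [{"consignee_id": "42", "status": "in_transit"},
       {"consignee_id": "42", "status": "delivered"}]
view = unify(erp, crm, wms)
print(view["42"])
# UnifiedCustomer(customer_id='42', email='ana@example.com', lifetime_revenue=200.0, open_shipments=1)
```

Most of the effort in this sketch is small normalizations (ID types, text amounts, email casing), which hints at why this layer absorbs so much of the early investment.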

Organizations that skip this layer — or underfund it — find that every AI initiative above it becomes a custom integration project. Speed slows. Costs rise. Models underperform because the data they depend on is fragmented, stale, or untrustworthy.

The Next Layer: Agentic AI

The stack described above reflects where most commerce organizations are investing today. But the trajectory is clear — and it changes the calculus on every layer beneath it.

Agentic AI is the shift from systems that respond to systems that act. For most of the past decade, AI in commerce meant powerful but passive tools — recommendation engines that suggested products, fraud systems that flagged transactions, copilots that drafted content. Humans made the final call on everything consequential.

That is changing. When AI systems gain the ability to plan across multiple steps, call external tools, and adapt their approach based on outcomes — without waiting for human instruction at each stage — the stack stops being a set of tools you operate and starts being a set of systems that operate alongside you.

In commerce, the early signals are already visible. Autonomous agents that manage replenishment workflows, dynamically adjusting orders based on live inventory signals and demand forecasts. Agentic customer service systems that resolve complex multi-step issues — warranty lookups, order modifications, substitutions — without escalation. Merchandising agents that test and deploy content variants continuously, learning from outcomes rather than waiting for a campaign cycle.
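The difference between responding and acting shows up in the shape of the control loop. The sketch below is deliberately simplified and rests on several assumptions: the tools (`check_inventory`, `forecast_demand`, `place_order`) and their data are hypothetical stand-ins for real systems, and a hard-coded rule-based planner takes the place of a model. It shows the replenishment pattern as plan, call a tool, observe the result, and adapt the next step, bounded by a step budget and an approval limit that escalates large orders to a human.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

# Hypothetical stand-ins for inventory, forecasting, and purchasing systems.
INVENTORY = {"SKU-1": 12}
FORECAST = {"SKU-1": 40}


def check_inventory(sku: str) -> int:
    return INVENTORY[sku]


def forecast_demand(sku: str) -> int:
    return FORECAST[sku]


def place_order(sku: str, qty: int) -> str:
    return f"ordered {qty} x {sku}"  # a real tool would submit a purchase order


TOOLS: Dict[str, Callable[..., Any]] = {
    "check_inventory": check_inventory,
    "forecast_demand": forecast_demand,
    "place_order": place_order,
}
# Where each tool's result is stored in the agent's working state.
STATE_KEY = {"check_inventory": "on_hand", "forecast_demand": "demand", "place_order": "ordered"}


def plan_next(state: Dict[str, Any]) -> Optional[Tuple[Any, ...]]:
    """Rule-based stand-in for a model's planning step: choose the next tool
    call from what has been observed so far, or return None when done."""
    if "on_hand" not in state:
        return ("check_inventory",)
    if "demand" not in state:
        return ("forecast_demand",)
    gap = state["demand"] - state["on_hand"]
    if gap > 0 and "ordered" not in state:
        return ("place_order", gap)
    return None


def run_agent(sku: str, max_steps: int = 5, approval_limit: int = 100) -> List[str]:
    """Plan, act, observe, repeat, within a step budget and an approval guardrail."""
    state: Dict[str, Any] = {}
    log: List[str] = []
    for _ in range(max_steps):
        action = plan_next(state)
        if action is None:
            break
        name, *args = action
        # Governance guardrail: orders above the limit go to a human instead of executing.
        if name == "place_order" and args[0] > approval_limit:
            log.append(f"escalated: order of {args[0]} exceeds limit of {approval_limit}")
            break
        result = TOOLS[name](sku, *args)
        state[STATE_KEY[name]] = result  # observe the outcome; the next plan adapts to it
        log.append(f"{name} -> {result}")
    return log


print(run_agent("SKU-1"))
# ['check_inventory -> 12', 'forecast_demand -> 40', 'place_order -> ordered 28 x SKU-1']
```

Even in this toy version, the agent's decision is only as good as the inventory and forecast values it reads, and the approval limit is the piece that keeps autonomy bounded.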

The implication for the stack is significant. Agentic systems don’t replace any of the layers below them — they make the quality of those layers matter more. An agent operating across your commerce workflows is only as reliable as the data it reads, only as fast as the infrastructure it runs on, and only as safe as the governance built around it.

Which means the organizations building strong foundations now — clean data, solid MLOps, deliberate platform choices — are not just preparing for today’s AI applications. They are building the substrate that agentic systems will depend on.

The stack is not finished. It is still being written.

Designing Across the Stack: Where to Start

The practical implication of this framework is not that every organization needs to invest equally across every layer of the stack. It is that every organization needs to understand which layers it has and which it is missing before it invests more in the top.

A few diagnostic questions worth asking:

- Do your AI-enabled applications have reliable, governed data to learn from — or are they training on fragmented inputs?

- Does your team have the workbench tools to experiment and differentiate — or are they dependent entirely on vendor roadmaps?

- Have you made deliberate platform choices that support your governance requirements and scale economics?

- Is your data infrastructure designed for learning, or for recording transactions?

The organizations winning with AI in commerce are not those with the most tools. They are those with the clearest view of how the layers connect — and the discipline to invest across all of them.
